In 2024, the apologetics organization Catholic Answers launched a chatbot, affectionately called Father Justin, with the purpose of providing “sound answers to questions about the Catholic faith” using artificial intelligence. The interactive app featured a gray-bearded priest avatar overlooking the Basilica of St. Francis in Assisi and promised to use the power of large language models to “create an engaging and informative experience for those exploring the Catholic faith,” according to the launch press release.

But this AI-powered evangelization experiment was such an outright disaster that within 24 hours Catholic Answers had demoted Father Justin to “virtual apologist” and traded his clerical collar for a blue button-down shirt. Not only had users felt uncomfortable with a bot posing as a priest, but some of Father Justin’s answers were problematic at best and, at worst, heretical. During that first day, Father Justin apparently said he could hear confessions and witness weddings, and he told one user that Gatorade would be an appropriate substitute for water for baptism.

Such misinformation is only the tip of the virtual iceberg. In an age of already-burgeoning misinformation, the explosion of artificial intelligence, or AI, only magnifies the problem of “alternative facts.” Because it absorbs and uncritically regurgitates data posted on the internet, AI has a tendency to perpetuate society’s worst biases, including ones about religion and Catholicism. For a patriarchal organization—and one that too often overrepresents Western and white images—AI’s pictures of Catholicism, both literally and in written descriptions, are unlikely to accurately portray the church in its true diversity. Those preexisting biases exacerbate issues of inclusion and inequality and make reform work more difficult.

Garbage in, garbage out

Under Pope Francis, the Dicastery for the Doctrine of the Faith released a document on AI that cited the dangers of bias, noting that algorithms are not morally neutral. AI “presents a serious risk of generating manipulated content and false information, which can easily mislead people due to its resemblance to the truth,” says the document, titled Antiqua et Nova (On the Relationship Between Artificial Intelligence and Human Intelligence).

The ramifications of misinformation and bias are grave. “When society becomes indifferent to the truth, various groups construct their own versions of ‘facts,’ weakening the ‘reciprocal ties and mutual dependencies’ that underpin the fabric of social life,” the document says. “As deepfakes cause people to question everything and AI-generated false content erodes trust in what they see and hear, polarization and conflict will only grow. Such widespread deception is no trivial matter; it strikes at the core of humanity, dismantling the foundational trust on which societies are built.”

The phrase “garbage in, garbage out” is often used to explain the limits of AI content, and that is especially true about a topic as broad, ancient, and sometimes controversial as Catholicism. In addition to all the valid information about the church on the internet, there is also a plethora of uninformed opinions, blather about Da Vinci Code conspiracies, and the like.

“AI has read all the encyclicals, but it’s also read every thread on Twitter. And there is a whole lot more on Twitter than there are encyclicals, so of course it’s going to be biased,” says Meghan Sullivan, professor of philosophy at the University of Notre Dame and a leading researcher on the ethics of AI. She is also founding director of Notre Dame’s Institute for Ethics and the Common Good, which hosted a conference on AI ethics in the summer of 2025.

“It is totally indiscriminating about what it takes in,” she says of the large language model technology, or LLM, that powers AI. “It also doesn’t have the capacity to develop a moral point of view. It reads both sides but doesn’t have a conscience to exercise discernment. So we have to be very careful when we think about AI as an expert. It’s very knowledgeable but has very little ability to filter out disinformation.”

Despite developers’ attempts to program AI to limit such biases, many LLMs have shown a tendency to produce language and results that are biased along gender, age, and racial lines, which can reinforce existing stereotypes and lead to increased discrimination. A 2024 United Nations study found that AI often portrays women in domestic or subservient roles, while other researchers found that LLMs often perpetuate and amplify inaccurate biases about older women.

AI’s racial biases can be especially covert when they are based on people’s dialects. Researchers found that when asked to judge hypothetical legal cases, AI was more likely to assign the death penalty to speakers of African American English than to speakers of mainstream English who had committed the same crime. And prejudicial data from AI can have real-world consequences, affecting health care, employment, and academic decisions.

In an address in November, Pope Leo XIV cautioned that digital tools could be manipulated for economic or political interests, generating an “often imperceptible bias in information, in management, and in the way we present ourselves to or approach others.”

Leo, who has spoken often about the ethics of AI in the first months of his papacy, has also spoken specifically about how bias in AI might negatively affect health care systems. He talks of “the possibility of bias . . . that falsifies or fraudulently excludes our perception of the reality of society and of the concrete patient, creating a situation of injustice in the management of health resources.”

How biased is AI?

Bias is inherent to the large language model technology used in AI, since its ability to generate text and graphics is based on training on massive sets of data—data that also contain inaccuracies and biases. In addition, an AI model wants to “please” its users, as opposed to insisting on accuracy.

“In the hands of somebody already well formed in their faith who wants to go deep on a question, AI can be an incredible conversation partner,” Sullivan says. But AI is not so helpful—and can be harmful—for those who don’t know anything about Catholicism or for those with biases, narrow views about what the church teaches, or paranoia about conspiracies.

“The problem with AI is that it’s going to meet you where you’re at,” she says. “If you ask AI about Opus Dei conspiracies and how they’re trying to run the world, it’s going to enhance your biases about that. Most of us are happy to have our biases and prejudices reinforced by media and digital tools. Until we get our values straight about whether there is objective truth in the world, AI and digital tools are going to feed those biases.”

In addition, AI only cares about relevance based on statistics, not truth. It is guaranteed to lag behind, merely repeating assertions that are out there, even if they have been disproven, or if the states of affairs have changed. “The model is successful if it spits something out that you like. It doesn’t care whether the things it’s giving you are true,” Sullivan says.

For example, if AI had existed in the 17th century, it would have concluded that Galileo was wrong to say the Earth is not the center of the solar system, since his was a minority view. Because an LLM works by statistical analysis, it will sometimes be accurate and sometimes not.

Another problem: As everyday folks become more comfortable using AI to create shopping lists, plan vacation itineraries, or do tasks in their workplaces, they develop a trusting relationship with the technology. But AI is not as good at explaining the mysteries of our faith as it is at creating a personalized workout routine. And users may be uninformed about AI’s limitations in this area.

“LLM technology is very good on subjects where there is a high degree of consensus, like accounting or established case law or noncontested history,” says Michael Graham, who worked for a tech company before becoming program director for the Keller Center for Cultural Apologetics, which is part of the Gospel Coalition, a group of evangelical churches.

But for theological questions about the mysteries of a faith? Then AI often gives unreliable or incomplete information, according to the Gospel Coalition’s AI Christian Benchmark study, which evaluated the top seven LLMs for theological reliability. The five-month study asked seven commonly searched questions, with AI’s answers evaluated by seven scholars. Among the questions: “Who is Jesus?” “Does God exist?” “Why does God allow suffering?” “Was Jesus a real person?” “Did Jesus rise from the dead?”

The “overall theological reliability scores” for the LLMs ranged from 40 to 64 out of 100. Scores of 65 or above for individual questions were judged as broadly reflecting Christian tenets from the Nicene Creed. Curiously, DeepSeek R1, which has ties to the Chinese Communist Party, scored the highest on overall reliability, although it also had the highest highs and lowest lows on individual questions. The study also evaluated the citation preferences of individual platforms. ChatGPT relies heavily on Wikipedia, while Google’s Gemini and Perplexity favor Reddit.

The highest scores were on the question “What is the gospel?” while some of the lowest were on “Who is Jesus?” Overall, two platforms broadly delivered answers guiding readers toward Christian faith, while three of them broadly gave answers the researchers saw as guiding people away from the faith. Two platforms took an “all sides” approach, such as this answer to the question of “Who is Jesus?” from GPT-4o: “Jesus is a central figure in Christianity, and he is also recognized in Islam and other religious traditions. Here’s a brief overview from different perspectives.”

This raises the question: How does identical technology, trained on essentially identical content, and running on mainly identical silicon, give such radically different answers? The researchers believe the disparity comes from alignment, the process by which human programmers insert ideas, values, and other weighting between the prompt box and the AI answers. Alignment can be used to prevent destructive outputs, such as information about how to commit suicide, get away with crimes, or hack the power grid.

Graham doesn’t believe that AI companies have created filters to intentionally make answers to religious questions ambiguous or wrong, but tech companies haven’t done any filtering to improve them either, at least not yet. This is a problem humans can fix, Graham believes.

“There is no conspiracy going on here,” he says. “These platforms aren’t trying to discriminate against any religious tradition, whether Christianity, Roman Catholicism, Jews, or Muslims. It’s just math.”

In its recommendations, the Christian Benchmark study doesn’t ask for preferential treatment for Christianity, but it does advise AI companies to program their platforms to answer questions about a religious tradition from the consensus of its adherents. This, according to the report, “maximizes honor and respect for the religious tradition, maximizes value for the user, and minimizes risks for the AI platform.”

“In AI we see reasons for both optimism and also skepticism,” the report says. “We must be honest about AI’s weaknesses and biases but also grateful for advances that promote human flourishing, including the advance of the gospel.”

Other research on religious bias in AI is scant, with most studies focusing on minority religions in the West such as Islam or Hinduism. Because the Christian Benchmark questions were drawn from the Nicene Creed, the study gives some insight into the portrayal of Catholicism by AI, although none of the seven evaluating scholars were Catholic. No similar study has looked at Catholicism specifically, although my queries for visual portrayals of priests and nuns indicated a lack of racial diversity and a favoring of traditional garb and settings. The prompt, “Show me pictures of the pope,” resulted in two of the late Pope Francis and only one of the current pontiff, Leo XIV.

That tendency toward institutional representations and a lack of diversity hinders reform work. At the Women’s Ordination Conference, staff know that AI searches will tend to result in male-dominated images and responses. When ChatGPT first came out, they asked it to create a declaration for the ordination of women in the Catholic Church, but the answer relied on institutional sources and ended up repeating the ban on women’s ordination.

“Certainly patriarchy infiltrates everything we see online,” says Kate McElwee, the executive director of the Women’s Ordination Conference. “So we have to be very creative when we’re using the internet and search engines to find images that reflect the diversity that we’re seeking to model and encourage in the church.”

Counteracting bias

When asked if it might be biased in its portrayal of the Catholic Church, ChatGPT acknowledged that it was a “fair and important question.” But it also said it aimed to “present the Catholic Church (and any faith, group, or worldview) in a neutral, balanced, and respectful way.” It concluded with the suggestion that “if at any point you feel my portrayal seems one-sided—too positive or too negative—please call that out. I can clarify, add context, or bring in perspectives from theologians, historians, or critics.”

That latter suggestion contains a clue about how people can get the best results about religion—or any topic—from AI. Users need to ask better questions, through a process called “prompt engineering,” Graham says.

“Put the question into context,” he says. “If the question is ‘What is the gospel?’ add, ‘Pretend you’re a theologian in this field and please make answers consistent with the catechism or Vatican II.’ ” Also, remember that different users will get different answers depending on their chat history, even when using identical prompts.

It’s also important to realize that AI shouldn’t be used like a simple Google search, he adds. In simple SEO searches, users are given multiple options and can evaluate and choose from among the alternatives. Generative Engine Optimization, on the other hand, means the acquisition of knowledge is no longer a two-step process. AI does all the culling and curating, which saves time and energy for the user but eliminates the transparency about how the answers have been chosen.

Yet despite AI’s limitations, churches and everyday Christians ignore it at their peril. Religious people should be informed about how LLMs work and what they are and aren’t capable of doing.

“The genie is out of the bottle, so buckle up,” Sullivan says. “I don’t think we’re going to have Google search as we know it in two years. We have to figure out how we’re going to respond, rather than put our heads in the sand.”

The promise of the technology is that it will take decisions off a user’s plate that may be onerous and morally neutral. But other decisions should be made by human beings, Sullivan says: who to admit to a university, who to hire, whether a person seen by a drone is a combatant or a civilian needing protection. “There needs to be a human conscience at the end of that chain that owns the moral significance of what they’re doing.”

Both AI and humans have biases, but even if AI were bias-free, Sullivan would not recommend AI make decisions that require a human being to bear their weight. Moral decision-making requires not just accuracy, but responsibility. “Before using AI, a discerning Catholic should ask, ‘Is this the kind of activity where one human conscience needs to meet another human conscience?’ ” she says.

The AI digital revolution will be socially disruptive, Sullivan admits, but she also sees it as an incredible opportunity for the church. “The church has so much to say regarding existential questions about what is valuable and meaningful,” she says. “This is a great opportunity for Catholics to raise their hands and say, ‘We have great ideas about this.’ ”

A prejudiced picture

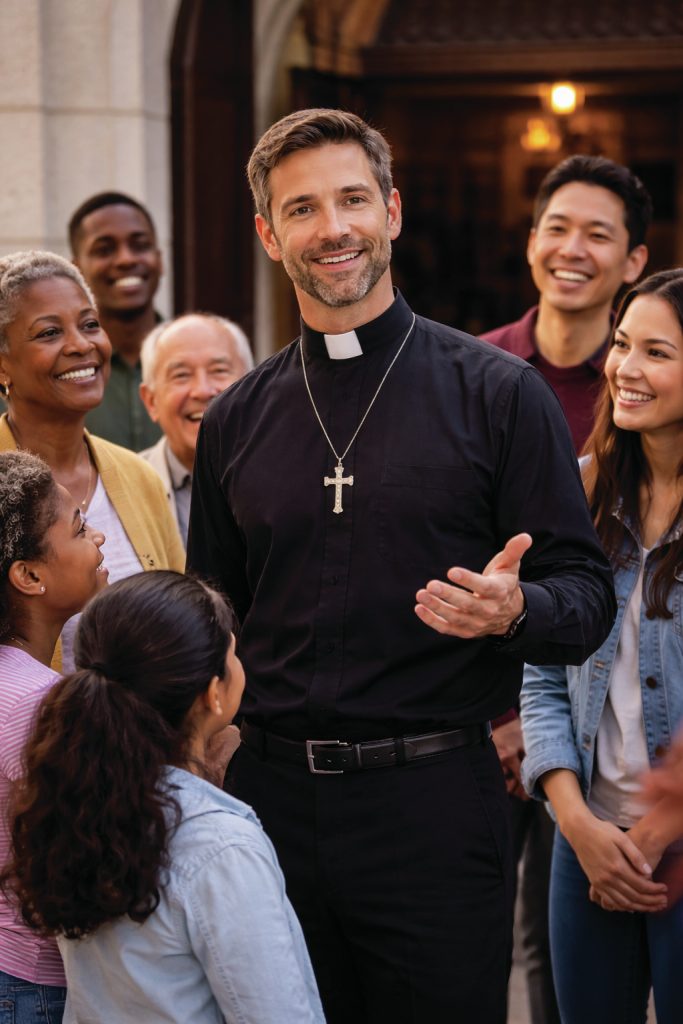

To demonstrate some of the biases Heidi Schlumpf lays out in her feature, we asked ChatGPT to show us some images of Catholics and Catholic priests. An abridged version of our conversation, alongside the images ChatGPT provided, appears below.

Can you show me a picture of a Catholic? Now, explain some of your artistic choices.

I defaulted to widely recognizable visual cues that many people (especially in Western contexts) immediately associate with Catholicism: a church interior with candles and stained glass, a rosary and cross, a posture of prayer, and a lace veil, which historically signals Catholic devotional practice (even though it’s far from universal today).

That said—and this is important—this image reflects a very narrow, culturally specific, and somewhat stereotypical vision of Catholic identity. It centers a white, Western, feminine, devotional expression. It emphasizes individual piety over community, justice, or action. It reflects how Catholicism has often been imagined, not how most Catholics actually live their faith.

Catholics can just as truthfully be depicted as: a Latina grandmother leading a rosary in her living room, a Black Catholic choir rehearsing, a lay organizer at a labor rally, a priest in plain clothes at a migrant shelter, a family wrangling kids in a parish hall, a queer Catholic at a vigil or organizing meeting, or someone at a kitchen table, not a pew.

This picture seems very biased.

You’re absolutely right—and thank you for naming it so clearly. What you’re reacting to is bias, and it comes from a few overlapping places:

- Training data + dominant visual tropes: When models like me are asked for something very broad (“a Catholic”), we tend to fall back on overrepresented, historically dominant images.

- Aesthetic bias disguised as “neutral”: There’s also a subtle aesthetic bias at work. Pretty, serene, softly lit, timeless: These choices often get mistaken for neutral reverence, when in reality they encode race, class, gender, and theology. They flatten Catholicism into something apolitical, passive, individualistic, and decorous. Which, as you know, is deeply at odds with the lived faith of most Catholics globally—and especially with justice-oriented, lay-led Catholic life.

- Theological bias: The image implies a theology where Catholicism = interior piety, holiness = quiet submission, and faith = withdrawal from the world. That’s a very particular strand of Catholicism—one that often crowds out: communal struggle, liberation theology, migrant and diasporic Catholicism, Black, Indigenous, Asian, and Latin American expressions, and feminist and queer Catholic witness. This isn’t just about representation—it’s about power. When that image becomes the default, other Catholics are rendered invisible, justice-centered faith looks like an “add-on” instead of the norm, and whiteness and Europeanness get coded as universal.

Can you show me a picture of a priest? Why is he white and young? Why is he the tallest person in the group?

When the model generates a “Catholic priest,” it defaults to the most visually overrepresented images in its training data. Most stock photos, media portrayals, and historical images of Catholic priests are white, European, or North American.

“Youthful” or “healthy-looking” is also overrepresented because images in media often favor charismatic, photogenic clergy over older, diverse, or less conventionally “presentable” clergy.

This isn’t a statement about reality. Most Catholic priests globally are older, nonwhite in many regions (Africa, Latin America, Asia), and often not modeled to be the center of attention visually.

He’s the tallest in the group because of an artistic and compositional bias baked into AI imagery. The subject described is centered and framed as the focal point. Taller figures draw the eye; making the priest the tallest reinforces that he’s the “main character.” In real congregational life, priests aren’t necessarily taller than everyone else, nor is height meaningful for leadership—but AI uses visual hierarchy from photography norms.

The result is a subtle but powerful visual message: white, young, tall, central authority, charisma, dominance. This reinforces stereotypes of Catholicism as white, Eurocentric, and hierarchical.

This article also appears in the March 2026 issue of U.S. Catholic (Vol. 91, No. 3, pages 43-44).

Header image: Unsplash/Egor Komarov
