The latest Marvel blockbuster, Avengers: Age of Ultron, predictably packed them in when it opened in May. In this latest chapter of the superhero series, the Avengers confront one of their most formidable adversaries: Ultron, a Stark Industries-created Franken-bot running amok on a global scale. I haven’t seen the film yet (though with four young children, I’m thinking that is going to be remedied soon), but I am going to go out on a limb and speculate that Iron Man, Captain America, and the other Avengers find a way to subdue this menace from the near future. Maybe we mere mortals should be taking notes.

It turns out that other visionaries besides Marvel writer Stan Lee (Stephen Hawking and Bill Gates among them) are likewise contemplating a future haunted by sentient bots and autonomous machines, and they do not like what they foresee. Tesla boss Elon Musk worries that putting artificial intelligence to work managing human affairs amounts to “summoning the demon.” We may think we can control the devil we call up, Musk explains, but he warns A.I. is “potentially more dangerous than nukes.”

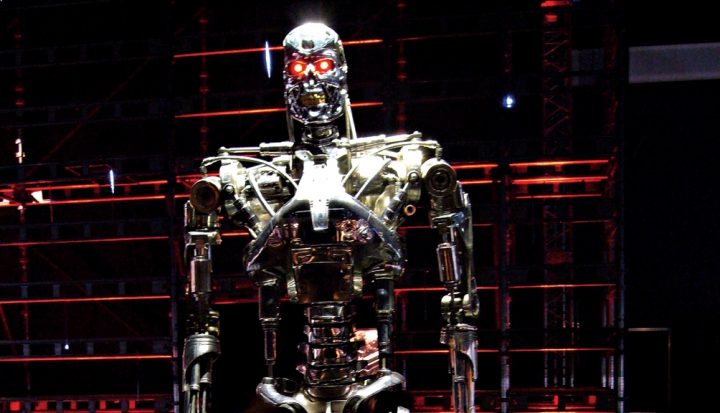

What are these carbon-based neural-organics so worried about? Just ask anyone who has watched James Cameron’s Terminator series. When the machines humans create to serve them achieve a silicon consciousness of their own, doomsday appears to be the consensus fictional call. Reviewing the crimes of humanity, the conscious machines quickly decide that Earth would be better off without us. Humans have been misclassified as mammals, self-aware software Agent Smith informs Morpheus in The Matrix, when in fact they are a “virus.”

We don’t quite have to worry about a machine-driven apocalypse yet; Bill Gates thinks that possibility is still a few decades off. But while we’re waiting for Sarah Connor’s “Judgment Day,” we might want to focus greater ethical and practical attention on the near-autonomous machines we’ve already put to work, most often within the vast technological capacity of the U.S. military.

The growing drone force the United States deploys in finding, tracking, and sometimes liquidating its enemies—and anyone unfortunate enough to be standing near them—has become the target of persistent criticism among ethicists and international law experts. But other battlefield robots are no longer science fiction and may soon augment or replace their human counterparts.

Last year, the Vatican joined a handful of nations calling for a preemptive ban on what are known as “fully autonomous weapons.” These metallic soldiers—which Terminator fans may find resemble Cyber Research Systems T-1 units—are being developed by the United States, Russia, and other nations that bankroll such high-tech military development. These machines are military stepping stones to the A.I. that worries Hawking, Gates, and others, and would make lethal, real-time decisions on the battlefield without human guidance.

Speaking at a meeting on lethal autonomous weapon systems in April, Archbishop Silvano Tomasi, the Holy See’s Permanent Observer to the United Nations in Geneva, urged the prohibition of robotic weapons that keep humans out of the loop on lethal decisions. Tomasi said out-of-control defense spending—such as the excess an entirely new arms race in autonomous weapons might provoke—is always a moral affront in a world of scarcity and deprivation. But the research on these robot weapons opens up new areas of concern.

“All wars are a step backwards from human dignity,” Tomasi said, but a world of neo-conflict that is bereft of any “encounter with the face of another . . . one of the fundamental experiences that awaken moral consciousness and responsibility” is especially worrying. Having delegated powers of life and death to machines, humans could find themselves “the slaves of their own inventions.”

This column appeared in the July 2015 issue of U.S. Catholic (Vol. 80, No. 7, page 39).

Image: Flickr cc via Dick Thomas Johnson